DARPA wants to know, and there's a workshop tomorrow.

The subject is ready for basic research.

Short-term applications may be feasible.

Self-awareness is mainly applicable to programs with persistent existence.

Easy aspects of state: battery level, available memory, etc.
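A minimal Python sketch of this easy kind of self-awareness, assuming the readings come from hypothetical sensors (the standard library has no portable battery API). `SelfState`, its fields, and the thresholds are illustrative names, not taken from the source.

```python
from dataclasses import dataclass


@dataclass
class SelfState:
    """Snapshot of a program's own resources (hypothetical readings)."""
    battery_fraction: float  # 0.0 = empty, 1.0 = full
    free_memory_mb: int

    def concerns(self, battery_low=0.2, memory_low_mb=256):
        """Return the facts about itself that the program should act on."""
        found = []
        if self.battery_fraction < battery_low:
            found.append("battery low")
        if self.free_memory_mb < memory_low_mb:
            found.append("memory low")
        return found


print(SelfState(battery_fraction=0.15, free_memory_mb=1024).concerns())
# prints ['battery low']
```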

Ongoing activities: serving users, driving a car.

Knowledge and lack of knowledge.

Purposes, intentions, hopes, fears, likes, dislikes.

Actions it is free to choose among, relative to external constraints. That's where free will comes from.

Permanent aspects of mental state, e.g. long-term goals, beliefs.

Episodic memory: only partial in humans, probably absent in animals, but readily available in computer systems.
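Because a program can keep a complete episodic record cheaply, a minimal Python sketch is an append-only log of (timestamp, event) pairs that can answer "What was I doing at time t?". `EpisodicMemory`, `what_was_i_doing`, and the sample events are hypothetical.

```python
import time
from dataclasses import dataclass, field


@dataclass
class EpisodicMemory:
    """Append-only record of what the program did and when."""
    episodes: list = field(default_factory=list)

    def record(self, event, timestamp=None):
        # Use wall-clock time unless the caller supplies a timestamp.
        ts = time.time() if timestamp is None else timestamp
        self.episodes.append((ts, event))

    def what_was_i_doing(self, t):
        """Return the latest event recorded at or before time t, or None."""
        earlier = [(ts, event) for ts, event in self.episodes if ts <= t]
        if not earlier:
            return None
        return max(earlier, key=lambda pair: pair[0])[1]


memory = EpisodicMemory()
memory.record("started driving John home", timestamp=100)
memory.record("noticed low hydrogen", timestamp=160)
print(memory.what_was_i_doing(120))  # started driving John home
```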

Human self-awareness is weak but improves with age.

A five-year-old, but not a three-year-old, can say: "I used to think the box contained candy because of the cover, but now I know it has crayons. He will think it contains candy."
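The five-year-old's competence can be sketched in Python, assuming beliefs are computed from observations: remembering one's own observations lets one replay past beliefs, and another agent's belief is predicted from what that agent has seen, not from what one now knows. All names and observation strings are hypothetical.

```python
ACTUAL_CONTENTS = "crayons"  # hypothetical ground truth
COVER_SUGGESTS = "candy"     # what the picture on the box suggests


def belief_from(observations):
    """What an agent believes about the box, given what it has seen."""
    if "looked inside" in observations:
        return ACTUAL_CONTENTS
    if "saw cover" in observations:
        return COVER_SUGGESTS
    return None  # no belief about the box yet


# My observations in order; remembering them lets me replay past beliefs.
my_history = ["saw cover", "looked inside"]
his_observations = ["saw cover"]


def my_belief_after(steps):
    """My belief after the first `steps` observations."""
    return belief_from(my_history[:steps])


print(my_belief_after(1))             # candy: what I used to think
print(my_belief_after(2))             # crayons: what I know now
print(belief_from(his_observations))  # candy: what he will think

# The three-year-old's error: attributing my current knowledge to him.
print(belief_from(my_history))        # crayons
```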

Simple examples: I'm hungry, my left knee hurts from a scrape, my right knee feels normal, my right hand is making a fist.

Intentions: I intend to have dinner; I intend to visit New Zealand some day. I do not intend to die.

I exist in time, with a past and a future. Philosophers argue a lot about what this means and how to represent it.

Permanent aspects of one's mind: I speak English and a little French and Russian. I like hamburgers and caviar. I cannot know my blood pressure without measuring it.

What are my choices? (Free will is having choices.)

Habits: I know I often think of you. I often have breakfast at the Peninsula Creamery.

Ongoing processes: I'm typing slides and also getting hungry.

Juliet hoped there was enough poison in Romeo's vial to kill her.

More: fears, wants (sometimes simultaneous but incompatible).

Permanent compared with instantaneous wants.
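A minimal Python sketch of wants that can be permanent or momentary and simultaneously held yet incompatible, assuming the designer supplies an explicit incompatibility relation. `Want`, `INCOMPATIBLE`, `conflicts`, and the example wants are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Want:
    content: str
    permanent: bool  # long-standing want vs. momentary one


# Hypothetical incompatibility relation supplied by the designer.
INCOMPATIBLE = {frozenset({"eat dessert", "lose weight"})}


def conflicts(wants):
    """Pairs of simultaneously held wants that cannot both be satisfied."""
    return [
        (a, b)
        for a, b in combinations(wants, 2)
        if frozenset({a.content, b.content}) in INCOMPATIBLE
    ]


current = [
    Want("lose weight", permanent=True),
    Want("eat dessert", permanent=False),
    Want("have dinner", permanent=False),
]
momentary = [w.content for w in current if not w.permanent]
print(momentary)                # ['eat dessert', 'have dinner']
print(len(conflicts(current)))  # 1
```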

- consider
- infer
- decide
- choose to believe
- remember
- forget
- realize
- ignore

Easy self-awareness: battery state, memory left.

Straightforward self-awareness: the program itself, the programming language specs, the machine specs.
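In Python these facts are directly readable: `inspect.getsource` returns a function's source text, `platform.python_implementation()` and `sys.version_info` identify the language implementation and version, and `platform.machine()` names the hardware architecture. A minimal sketch; `describe_myself` is a hypothetical name, and the language specs are approximated here by the implementation name and version rather than the specification itself.

```python
import inspect
import platform
import sys


def describe_myself():
    """Facts a Python program can read about itself and the machine it runs on."""
    try:
        own_source = inspect.getsource(describe_myself)
    except (OSError, TypeError):
        own_source = None  # source unavailable, e.g. at an interactive prompt
    return {
        "own_source": own_source,
        "language": platform.python_implementation(),
        "language_version": tuple(sys.version_info[:3]),
        "machine": platform.machine(),
    }


facts = describe_myself()
print(facts["language"], facts["language_version"], facts["machine"])
```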

Self-simulation: possible for any given number of steps. In general, a program can't answer "Will I ever stop?", and it can't answer "Will I stop in less than $n$ steps?" in less than $n$ steps.
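Bounded self-simulation can be sketched by modelling a program as a step function on its state, an assumption made only for illustration. The simulated program is the Collatz iteration, chosen because whether it halts for every starting value is an open problem. The sketch answers the bounded question by spending up to n simulated steps; it does not, and cannot in general, decide "Will I ever stop?". `collatz_step` and `stops_within` are hypothetical names.

```python
def collatz_step(x):
    """One step of a program whose halting for every input is an open problem."""
    if x == 1:
        return None  # None means "halted"
    return x // 2 if x % 2 == 0 else 3 * x + 1


def stops_within(step, state, n):
    """Simulate `step` from `state` for at most n steps.

    Returns (True, k) if the program halts after k transitions (k < n),
    otherwise (False, n). Answering costs up to n simulated steps.
    """
    for k in range(n):
        nxt = step(state)
        if nxt is None:
            return True, k
        state = nxt
    return False, n


print(stops_within(collatz_step, 6, 20))  # (True, 8)
print(stops_within(collatz_step, 6, 8))   # (False, 8)
```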

Its choices and their inferred consequences (free will).
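Choices relative to external constraints, together with their inferred consequences, can be sketched in Python, assuming a hypothetical world model that maps each action to a set of predicted outcomes. `EFFECTS`, `choices`, `consequences`, and the actions are illustrative, not from the source.

```python
# Hypothetical world model: each action maps to its predicted consequences.
EFFECTS = {
    "continue home": {"arrive on time", "run out of hydrogen"},
    "stop for hydrogen": {"refuel", "arrive late"},
    "stop for coffee": {"arrive late"},
}


def choices(actions, constraints):
    """Actions that no external constraint rules out."""
    return [a for a in actions if all(allowed(a) for allowed in constraints)]


def consequences(action):
    """Inferred consequences of an action under the world model."""
    return EFFECTS.get(action, set())


# An external constraint, e.g. the passenger has ruled out coffee stops.
constraints = [lambda action: action != "stop for coffee"]
available = choices(EFFECTS, constraints)
undesired = {"run out of hydrogen"}
acceptable = [a for a in available if not consequences(a) & undesired]
print(available)   # ['continue home', 'stop for hydrogen']
print(acceptable)  # ['stop for hydrogen']
```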

"I hope it won't rain tomorrow." Should a machine hope and be aware that it hopes? I think it should sometimes.

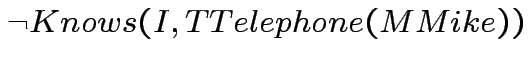

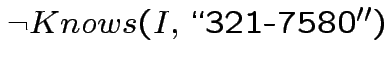

![]() , so I'll have to look it up.

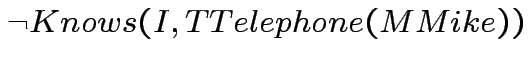

We had

, and I'll have to look it up.

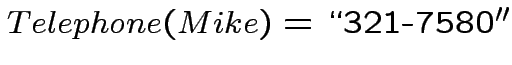

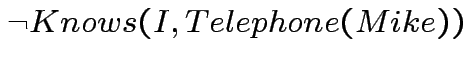

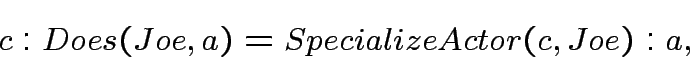

Suppose

. If we write

, then substitution would give

, which doesn't make sense.

There are various proposals for getting around this. The most widely advocated is some form of modal logic. My proposal is to regard individual concepts as objects and to represent them by different symbols, e.g. by doubling the first letter.

There's more about why this is a good idea in my "First order theories of individual concepts and propositions."
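The concepts-as-objects proposal can be sketched in Python: concepts such as `MMike` and `TTelephone(MMike)` are ordinary objects, a separate `denot` function maps them to what they denote, and knowledge is a relation to concepts. Equal denotations then do not license substituting one concept for another inside `knows`. The people, the shared number, and the function names are hypothetical; this illustrates the idea and is not the formalization in the cited paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concept:
    """An individual concept, distinct from whatever it denotes."""
    name: str
    args: tuple = ()


def TTelephone(person):
    """The concept of a person's telephone number."""
    return Concept("TTelephone", (person,))


MMike = Concept("MMike")
MMary = Concept("MMary")

# Hypothetical facts: Mike and Mary happen to share a telephone number.
PERSON = {MMike: "Mike", MMary: "Mary"}
PHONE = {"Mike": "555-0100", "Mary": "555-0100"}


def denot(concept):
    """Map a concept to the object it denotes."""
    if concept.name == "TTelephone":
        return PHONE[denot(concept.args[0])]
    return PERSON[concept]


# Knowledge relates a person to a concept, not to the number itself.
knows = {("Pat", TTelephone(MMike))}

print(denot(TTelephone(MMike)) == denot(TTelephone(MMary)))  # True
print(("Pat", TTelephone(MMary)) in knows)                   # False
```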

The main application of contexts as objects is to assert relations between the objects denoted by different expressions in different contexts. Thus we have

Such relations between expressions in different contexts allow a situation calculus theory in which the actor is not explicitly represented to be used in an outer context in which there is more than one actor.

We also need to express the relation between an external context, in which we refer to the knowledge and awareness of AutoCar1, and AutoCar1's internal context, in which it can use "I".
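A minimal Python sketch of contexts as objects, assuming facts are tuples whose first element is the predicate. Lifting a fact out of AutoCar1's internal context replaces "I" with AutoCar1, and lifting an action from a single-actor theory attaches its actor. `Context`, `lift`, `lift_action`, `Does`, and `GasStation1` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """A context as an object: facts true inside it, plus who "I" is."""
    name: str
    agent: str  # what "I" denotes when a fact is lifted to the outer context
    facts: set = field(default_factory=set)

    def lift(self, fact):
        """Restate an inner fact in the outer context, replacing "I"."""
        predicate, *args = fact
        return (predicate, *(self.agent if a == "I" else a for a in args))

    def lift_action(self, action):
        """Inner actions leave the actor implicit; outside, name the actor."""
        return ("Does", self.agent, action)


c0 = Context("C0", agent="AutoCar1")
c0.facts.add(("Driving", "I", "John", "Home1"))

print({c0.lift(f) for f in c0.facts})
# {('Driving', 'AutoCar1', 'John', 'Home1')}
print(c0.lift_action(("StopAt", "GasStation1")))
# ('Does', 'AutoCar1', ('StopAt', 'GasStation1'))
```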

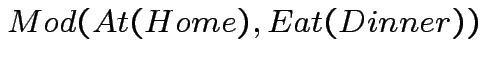

Pat is aware of his intention to eat dinner at home.

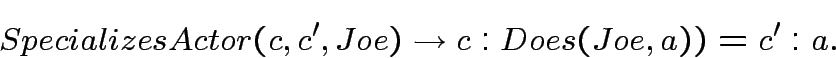

is a context.

denotes the general act of eating dinner, logically different from eating ![]() .

is what you get when you apply the modifier "at home" to the act of eating dinner.

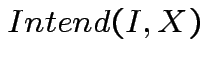

says that I intend ![]() . The use of ![]() is appropriate within the context of a person's (here Pat's) awareness.

We should extend this to say that Pat will eat dinner at home unless his intention changes. This can be expressed by formulas like

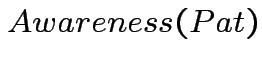
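The default "Pat will eat dinner at home unless his intention changes" can be approximated procedurally, assuming intention changes arrive as timestamped events. This is a simple persistence rule, not a logical formalization; `predicted_action` and the event format are hypothetical.

```python
def predicted_action(intention, changes, dinner_time):
    """Default persistence: the intention holds unless it changes first.

    `changes` is a list of (time, new_intention) pairs (an assumed format).
    """
    current = intention
    for t, new_intention in sorted(changes):
        if t < dinner_time:
            current = new_intention
    return current


plan = "eat dinner at home"
print(predicted_action(plan, [], 19))                               # eat dinner at home
print(predicted_action(plan, [(17, "eat dinner at a cafe")], 19))  # eat dinner at a cafe
print(predicted_action(plan, [(20, "eat dinner at a cafe")], 19))  # eat dinner at home
```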

AutoCar1 is driving John from Office to Home. AutoCar1 is aware of this. AutoCar1 becomes aware that it is low on hydrogen. AutoCar1 is permanently aware that it must ask permission to stop for gas, so it asks for permission. Etc., etc. These facts are expressed in a context $C0$:

$$
\begin{array}{l}
C0: \\
Driving(I, John, Home1) \\
\land \ldots \\
\ldots sBecomes(Want(I, SStopAt(GGasStation1))) \\
\land
\end{array}
\qquad (3)
$$
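The chain of awareness in the AutoCar1 example can be sketched as propositional forward chaining, assuming each formula is flattened to a string and each inference is an if-then rule. This drops the contexts, time, and `Becomes` structure of formula (3); the rule strings and `forward_chain` are hypothetical.

```python
RULES = [
    # (premises, conclusion): hypothetical propositional stand-ins for C0's formulas
    ({"low on hydrogen"}, "wants to stop at gas station"),
    ({"wants to stop at gas station", "must ask permission to stop"},
     "asks John for permission"),
]


def forward_chain(facts, rules):
    """Add conclusions of rules whose premises hold until nothing changes."""
    facts = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= facts and conclusion not in facts:
                facts.add(conclusion)
                changed = True
    return facts


aware = {"driving John home", "must ask permission to stop"}
aware.add("low on hydrogen")  # AutoCar1 becomes aware it is low on hydrogen
print("asks John for permission" in forward_chain(aware, RULES))  # True
```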

Does the lunar explorer require self-awareness? What about the entries in the recent DARPA contest?

Do self-aware reasoning systems require dealing with referential opacity? What about explicit contexts?

Where do tracing and journaling involve self-awareness?

Does an online tutoring program (for example, a program that teaches a student chemistry) need to be self-aware?

What is the simplest self-aware system?

Does self-awareness always involve self-monitoring?

In what ways does self-awareness differ from awareness of other agents? Does it require special forms of representation or architecture?

John McCarthy and Patrick J. Hayes. "Some Philosophical Problems from the Standpoint of Artificial Intelligence." Machine Intelligence 4, 1969. Also http://www-formal.stanford.edu/jmc/mcchay69.html.

John McCarthy. "Actions and other events in situation calculus." KR2002. Also http://www-formal.stanford.edu/jmc/sitcalc.html.